Basic QC Practices

When is it time to wean yourself off warning rules?

More than 2,000 laboratory professionals worldwide registered for the Warning on Warning Rules webinar on February 13, 2025. Several hundred more have watched the on-demand recording since. The topic seems to have struck a nerve.

Sten Westgard, MS

March 2025

Inspired by the Warning on Warning Rules webinar (available on-demand here).

I was recently in conversation with someone who strongly believes in warning rules. In his high-volume laboratory, on any given day, he runs over 900 controls, with around 50 warning rule flags cropping up at the beginning of a shift, and another 50 warning rule flags at the end of the same shift. That is approximately 100 alarms a day (remember, 2 controls with 1:2s limits are expected to generate a 9% false rejection rate, so his experience matches the expectation). But that did not bother him: he has a staff of 5 technologists, so each one only has to review 20 alarms a day.

So that’s 700 warnings a week, or about 36,400 warnings every year. Each technologist on staff will review about 7,280 additional warnings every year.

Does this sound like a sound strategy to you? Even if the review takes moments, the time and effort and cost will still add up.

Let’s translate this approach to a different scenario. Say in your laboratory and hospital and health system, you have around 900 smoke detectors spread out across the facility. If 100 smoke detectors sounded an alarm every day, would you consider that acceptable? It will only take a moment of your time to check if there’s a real fire, and then you can reset the detector.

What if you got an extra 20 emails every day for a year? All of them junk, of course. But all you have to do is review the subject line, determine it’s junk, and delete it. Mere seconds. But after more than 7,200 of those a year, would you consider changing the filters on your in-box?

We accept the level of rejections and warnings because we’ve become habituated to it. Our failed QC habits have been “boiling the frog” slowly, adding more and more warnings and outliers as our menu expanded, as our volume increased, as our vendors ignored (or profited from) the growing problem.

It’s helpful to go back to the introduction of the original 2s warning rule to see the source of our issue, and trace how it’s metastasized over the decades.

QC before warnings: the constant alarm

Before warning rules were introduced, the standard QC practice was to use the 1:2s rule as a rejection rule. That is, any control value falling outside 2 sd limits meant the run was out of control, the run must be stopped, results must not be reported, and the system needed to be fixed before resuming.

Nevertheless, it was well known that the 1:2s rule had a problem: high false rejection rates. Using 1:2s with two controls is expected to generate 9% false rejection, and with three controls, 14% false rejection. False rejection, to belatedly remind the reader, is a situation when there is nothing wrong with the method, there is just normal variation that has taken the control point past the 2 sd limit. These false rejection rates are available in standard statistical tables of the normal distribution (“small” point: our traditional control materials must take on a normal distribution in order to be useful, otherwise we cannot bring the tools, powers, and advantages of statistical quality control to bear).
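The false rejection figures above can be approximated directly from the normal distribution. The following is a minimal sketch, assuming each control value is independent and normally distributed; the function name `false_rejection_rate` is hypothetical, chosen for illustration only.

```python
import math


def false_rejection_rate(n_controls: int, limit_sd: float = 2.0) -> float:
    """Chance that at least one of n in-control values lands outside +/- limit_sd.

    Assumption: control values are independent and normally distributed,
    and nothing is wrong with the method (only normal variation is present).
    """
    # Probability that a single in-control value stays inside the limits.
    p_within = math.erf(limit_sd / math.sqrt(2.0))
    # A false rejection occurs if any one of the n values falls outside.
    return 1.0 - p_within ** n_controls


if __name__ == "__main__":
    for n in (1, 2, 3):
        print(f"{n} control(s): {100 * false_rejection_rate(n):.1f}% false rejection")
```

Under these assumptions the sketch gives roughly 9% for two controls and about 13% for three, in the neighborhood of the figures quoted above; published values may rest on different rounding or simulation conditions.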

The constant alarms were manageable when there was a small menu and small volume of testing. Back then, there was slack in the staffing, enough capacity to absorb the excessive warnings. But the unceasing alarms did give rise to worse practices: repeating controls, running new controls, repeating new controls, and so on, sometimes paired with frequent recalibration. Unnecessary warnings lead to unnecessary responses.

Enter Westgard Rules and the classic Warning Rule

A breakthrough occurred with the introduction of the multirule QC procedure approach by Westgard JO et al. By implementing what became known as “Westgard Rules”, labs switched from rejecting runs over 2 sd outliers to treating that common outlier as a warning – not a reason to reject the run, but the stimulus to start checking other rejection rules. With that, the false rejection rates of 9% to 14% were cut by more than half (Westgard Rules, depending on the number of rules and number of levels, operate with roughly 2-6% false rejection). The reduction in outliers was swift and palpable, and these benefits led to rapid worldwide adoption of the rules (that the rules were FREE to all helped a lot).

There were two reasons why the 2s rule was turned into a warning rule:

- The 2 sd rule, even back in 1981, was deeply ingrained in the QC practices of laboratories. To ask labs to go “cold turkey” and completely ignore all 2 sd outliers would have been a big ask. Think of this as a bit of social engineering – for laboratory technologists in the habit of paying attention to 2 sd outliers, this was a gradual off-ramp. Yes, you can still watch the 2 sd limits, but just don’t reject everything past them.

- In 1981, how was QC being interpreted? Not on a phone, pad, laptop, desktop, middleware, informatics, LIS, or some automated software. It was mostly done with graph paper and pen/pencils. And the interpretation engine was the technologist’s brain. Thus, requiring a technologist to interpret 5 different rules for every data point was a big ask. The warning rule served as cognitive workload balancing. Technologists didn’t need to check every data point with 5 rules. They waited until something exceeded the 2 sd limit (thus, only 1 rule to check per point for a majority of the QC), then checked the other 5 rules. A lot less work for the technologist.

Fast forward a few decades: where is all the heavy lifting being done for Westgard Rules? In software. Do we worry about the cognitive workload on a computer? Do we need to give the CPU some rest? Hardly. Once we’ve gotten the rules automated in software, we can skip the warning and just go directly to the rejection rules. Why wait for a point to exceed 2 sd, when you can catch a 4:1s violation right away? (Think about this for a minute).
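To make the point concrete, here is a simplified sketch of software evaluating the rejection rules directly on every point, with no 2 sd warning gate. It assumes control results have already been converted to z-scores (deviation from the mean in sd units) and treats them as one chronological stream; in practice R:4s is applied across control levels within a run, and real QC software handles runs and levels more carefully. The function names are hypothetical.

```python
from typing import List, Sequence


def _same_side_run(z: Sequence[float], count: int, threshold: float) -> bool:
    """True if the last `count` values are all above +threshold or all below -threshold."""
    if len(z) < count:
        return False
    tail = z[-count:]
    return all(v > threshold for v in tail) or all(v < -threshold for v in tail)


def violated_rules(z: Sequence[float]) -> List[str]:
    """Return the rules violated by the most recent point(s) in a z-score stream.

    Simplifying assumption: a single stream of consecutive control values.
    """
    rules: List[str] = []
    if not z:
        return rules
    if abs(z[-1]) > 3.0:                      # 1:3s: one value beyond 3 sd
        rules.append("1:3s")
    if _same_side_run(z, 2, 2.0):             # 2:2s: two in a row beyond 2 sd, same side
        rules.append("2:2s")
    if len(z) >= 2:
        a, b = z[-2], z[-1]
        if (a > 2.0 and b < -2.0) or (a < -2.0 and b > 2.0):
            rules.append("R:4s")              # R:4s: one beyond +2 sd, the other beyond -2 sd
    if _same_side_run(z, 4, 1.0):             # 4:1s: four in a row beyond 1 sd, same side
        rules.append("4:1s")
    if _same_side_run(z, 8, 0.0):             # 8:x: eight in a row on one side of the mean
        rules.append("8:x")
    return rules


if __name__ == "__main__":
    # No point exceeds 2 sd, so a 1:2s warning gate would never have fired.
    print(violated_rules([1.2, 1.4, 1.1, 1.3]))
```

In the usage example, none of the four values reaches 2 sd, yet the 4:1s rule is violated; a procedure that waits for a 2 sd warning before checking other rules would not flag it at this point.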

Warning Rules in an era of Westgard Sigma Rules

Modern Westgard Rules recommendations – particularly the Westgard Sigma Rules – do not recommend using 2 sd limits for warning or rejection. Abolish the 2 sd limits. Even if they are only being used as warnings, they are adding too much noise to your daily routine.

When we can characterize the quality of a method on the Six Sigma scale, it allows us to pare down the rejection rules. And we can then analyze objectively the benefits and costs of adding an additional rule that isn’t needed. The tool we’ll use is the Critical-Error graph, which allows us to see the error detection and false rejection of different control rules.

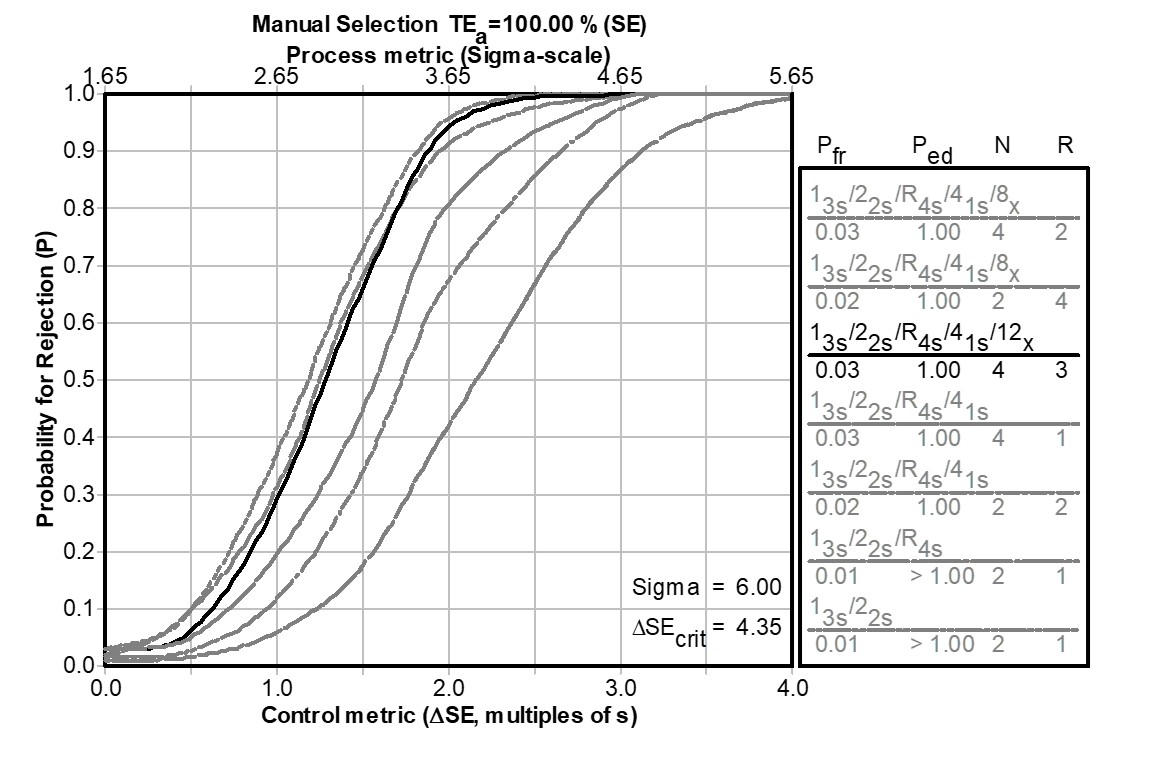

6 Sigma: Warnings don’t bring detection benefits

[1st graph]

The performance is so good here it’s actually “off the chart.” At world class performance, only a 1:3s rule is needed to reject a run. That means the laboratory could potentially use any of the unused Westgard Rules (2:2s, 4:1s, 8:x for example) as warning rules. But adding these rules doesn’t really have any impact on detecting errors: error detection can't increase because it's essentially at the maximum already. So the addition of these rules can only add more false rejection. For each additional rule above, roughly an additional 1% false rejection will be generated. So adding warning rules in a Six Sigma situation really just adds more noise.

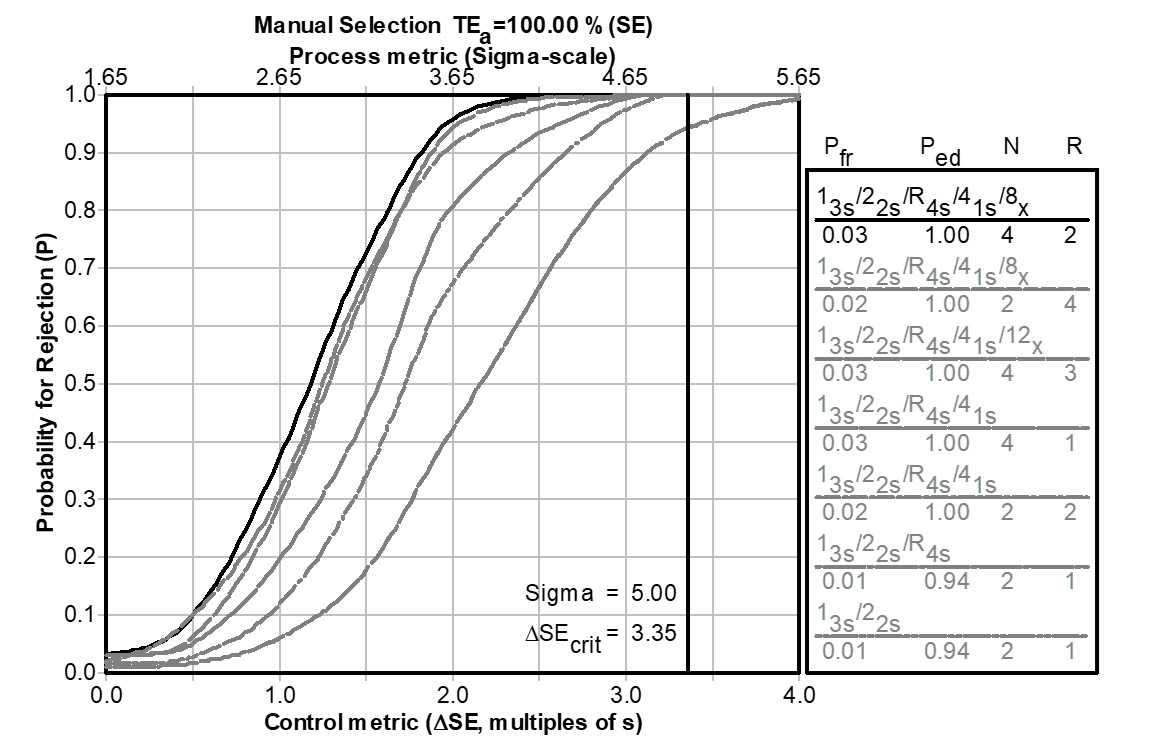

5 Sigma: Minor benefits of adding rules

At 5 Sigma, the performance is back on the chart. 1:3s/2:2s/R:4s is the recommended set of rejection rules. So the 4:1s and the 8:x could be used as warning rules. If the 4:1s rule is used as a warning rule, there is a small boost in error detection, about 6%, with an additional 1% more false rejection. Adding the 8:x after that as a warning rule would not boost the error detection, it would just add more false rejection. So there is a narrow case to be made for the 4:1s as a warning rule here.

4 Sigma: only one Warning Rule possible

At 4 Sigma, the required number of Westgard Sigma Rules is also 4: 1:3s/2:2s/R:4s/4:1s. That leaves only one rule that could be added as a possible warning rule, the 8:x rule. Adding it will gain 8% more error detection, at a cost of 1% more false rejection. So there’s a better case for using 8:x as a warning rule when Sigma is 4.

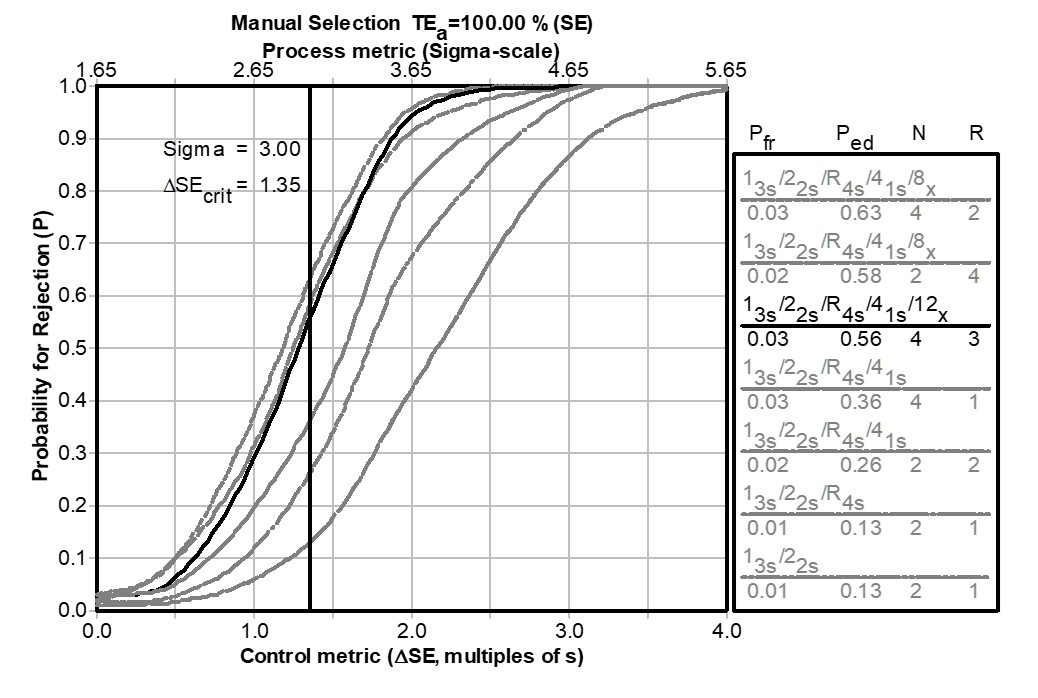

3 Sigma: All Rejection, no Warnings

Our last graph is when we run out of warning rules, because they’re all needed to serve as rejection rules. When the analytical Sigma metric is 3, that’s considered the minimum acceptable performance, and the maximum number of Westgard Sigma Rules is then necessary: 1:3s/2:2s/R:4s/4:1s/8:x. Every rule is a rejection rule. There are no warnings.

For any assays falling below 3 Sigma, the same scenario plays out. All Westgard Sigma Rules are needed for error detection and no rules are left to be used as warning rules. [And for assays below 3 Sigma, all the Westgard Rules really aren’t enough; you should consider adding non-statistical techniques to help monitor a method that is that error-prone.]

Please also note, the examples discussed the rules convenient for Ns of 2 and 4, but the same scenarios hold true for Ns of 3 and 6. If you're running 3 levels of control, you're considering 3:1s, 6:x, 9:x warning rules.
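The Sigma-by-Sigma walk-through above can be summarized as a small lookup. This is a sketch for the N of 2 and 4 rule set only, not an official implementation: the function name `split_rules` is hypothetical, and the handling of in-between values (for example, treating 5.5 Sigma like 5 Sigma) is an assumption made here for illustration.

```python
from typing import List, Tuple

# Rule order as presented in the text, for Ns of 2 and 4.
ALL_RULES: List[str] = ["1:3s", "2:2s", "R:4s", "4:1s", "8:x"]


def split_rules(sigma: float) -> Tuple[List[str], List[str]]:
    """Split the rule set into (rejection rules, leftover candidate warning rules).

    Assumption: a Sigma metric between two whole levels is treated like the
    lower level, which is the more conservative choice.
    """
    if sigma >= 6.0:
        needed = 1   # 1:3s only
    elif sigma >= 5.0:
        needed = 3   # 1:3s/2:2s/R:4s
    elif sigma >= 4.0:
        needed = 4   # 1:3s/2:2s/R:4s/4:1s
    else:
        needed = 5   # every rule is a rejection rule (3 Sigma and below)
    return ALL_RULES[:needed], ALL_RULES[needed:]


if __name__ == "__main__":
    for sigma in (6.0, 5.0, 4.0, 3.0):
        rejection, leftover = split_rules(sigma)
        print(f"{sigma} Sigma: reject on {rejection}; leftover {leftover}")
```

The leftover list only shows which rules are free to be used as warnings; as discussed above, a leftover rule is worth enabling only when it adds error detection, which here means 4:1s at 5 Sigma and 8:x at 4 Sigma.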

At the end of this analysis, we see there are narrow circumstances where using a 4:1s or an 8:x could be useful. But there are many other scenarios where the warning rule adds nothing but noise, or where warnings aren’t allowed because every rule reveals a true error that must be rejected.

Notice again that Westgard Sigma Rules do not include 2 sd limits, ever. Not for warning. Not for rejection. The 2 sd rule is so prone to false alarms, it is counter-productive to implement.

Learning to live with fewer alarms: are we running QC for error detection or therapy?

In a scenario where there are few rejection rules, some laboratories blanch. The culture shift, removing so many rules, leaves them feeling exposed. So they keep implementing some Westgard Rules, but now they use them as warning rules. For example, they keep the 4:1s rule in place even for a 6 Sigma method, reasoning that a violation might indicate a small problem – not critical yet – that could be investigated and even solved before it reaches the point of causing a run rejection.

The downside of this practice is that we’re right back where we started, with too many rules and too many outliers, many of them false alarms. If you want a very simplified rule of thumb, think of the addition of each of the Westgard Rules like the 2:2s, 4:1s, 8:x as adding about 1% more false rejection to your laboratory. So if you’re running QC every day of the year, you’re adding 3-4 false rejections per test with the addition of each warning rule. (If you keep the 1:2s rule in place, you’re back with 9% false rejection or more). Doesn’t seem like too much, but if you’ve applied warning rules everywhere, your typical lab with 60-80 tests on the menu is going to see close to 1 false rejection every day. If you’ve got multiple warning rules, you’re drowning yourself in alarms.
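The rule-of-thumb arithmetic in the paragraph above can be written out as follows. This is a back-of-the-envelope sketch that assumes one QC event per test per day and treats each added warning rule as contributing a flat 1% false rejection, added together linearly; the function names and default values are illustrative assumptions, not measured rates.

```python
def false_rejections_per_test_per_year(
    rate_per_rule: float = 0.01,   # assumed ~1% false rejection per added rule
    warning_rules: int = 1,
    qc_events_per_day: int = 1,    # assumption: QC is run once a day
    days_per_year: int = 365,
) -> float:
    """Expected extra false rejections for one test over a year."""
    return rate_per_rule * warning_rules * qc_events_per_day * days_per_year


def false_rejections_per_lab_per_day(
    tests_on_menu: int,
    rate_per_rule: float = 0.01,
    warning_rules: int = 1,
    qc_events_per_day: int = 1,
) -> float:
    """Expected extra false rejections across the whole menu in one day."""
    return tests_on_menu * rate_per_rule * warning_rules * qc_events_per_day


if __name__ == "__main__":
    print(f"Per test per year: {false_rejections_per_test_per_year():.2f}")
    for menu in (60, 80):
        per_day = false_rejections_per_lab_per_day(menu)
        print(f"{menu}-test menu, one warning rule: {per_day:.1f} per day")
```

With one warning rule this works out to between 3 and 4 false rejections per test per year and somewhat under 1 per day for a 60-80 test menu; raising `warning_rules` scales both numbers proportionally under the linear assumption.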

Leaning out your QC can feel risky, can make you worry about missing a true out-of-control event. But if you’re applying multiple warning rules when you don’t need to, you’re not running statistical QC, you’re running therapeutic QC. It’s QC to help you relax and sleep at night. We can’t produce mathematics to help you justify that practice.

If you’re uncomfortable reducing your rules or eliminating your warnings, spend some time reviewing all of those false rejections. What is the signal to noise ratio? How many false rejections are acceptable in order to catch one real error?

One last sign to wean off warning rules

They don’t give awards for running too much QC, applying too many rules, or repeating too many controls. (If there was an award for running lots of Westgard Rules, believe us, we’d tell you about it). But I bet you don’t go home at the end of your shift and boast to your partner or spouse or family member about the false alarms you dealt with that day. “Today I chased the ghosts of 4 QC outliers and after a lot of work, it turned out it was all for nothing.” When you think of all the warnings and repeats, you’re not proud, you’re not likely to include those details when you talk to others, except in a way that admits failure.

When we advertise for new technologists for our laboratories, we don’t include “must be proficient in repeating controls multiple times” and “must be skilled in implementing rules that generate high false alarms” in our job description. There’s a reason for that.

To reiterate: with Westgard Sigma Rules, the number of rules you need as rejection rules goes down as your analytical Sigma-metric goes up. The better your method, the fewer rules you need, for both rejection and warning. A Six Sigma method, delivering world class quality, doesn’t need Westgard Rules (in the sense of a need for multiple rules – you only need one rule). Setting your limits at 3 sd (1:3s) is sufficient for error detection. Any violations of 2:2s, R:4s, 4:1s, 8:x, etc. do not represent medically important errors. They might be statistically interesting, but they are not reason to reject the run. As the analytical Sigma metric of the method goes down, more rules are needed for error detection, and there are fewer choices of rules to turn into warning rules. At a certain point, every rule violation is a real fire, there are no warnings anymore. Thus, there are a limited number of scenarios where adding a warning rule makes sense. The blanket use of warning rules on all tests is highly discouraged.

Here’s your final warning: stop using so many warning rules.